By Mike Hearn

The whooping cough ripped through the hospital like wildfire.

It started with an internist and spread from there, with a severe cough quickly developing in other healthcare workers. Whilst not deadly for healthy adults the disease can be fatal for the elderly, the frail and very young children, so the health system moved quickly. There was no time to lose — within weeks over 1,000 staff were furloughed and quarantined. 142 people tested positive for the disease, thousands of people were given antibiotics and ICU beds were closed. It was a swift and effective response by highly trained public health professionals, armed with the best tools modern medicine could provide.

Only one thing went wrong.

Gina Kolata’s story in the New York Times about what happened in 2006 at Dartmouth-Hitchcock Medical Center makes for astonishing reading. I can’t recount it better than she did — you should really just go and read it right now. But if you’d rather not, I’ll quote some of the most important parts (emphasis mine):

Not a single case of whooping cough was confirmed with the definitive test, growing the bacterium, Bordetella pertussis, in the laboratory. Instead, it appears the health care workers probably were afflicted with ordinary respiratory diseases like the common cold.

… specialists say the problem was that they placed too much faith in a quick and highly sensitive molecular test that led them astray … At Dartmouth the decision was to use a test, P.C.R., for polymerase chain reaction … their sensitivity makes false positives likely, and when hundreds or thousands of people are tested, as occurred at Dartmouth, false positives can make it seem like there is an epidemic.

There are no national data on pseudo-epidemics caused by an overreliance on such molecular tests, said Dr. Trish M. Perl … but, she said, pseudo-epidemics happen all the time. The Dartmouth case may have been one of the largest, but it was by no means an exception, she said. There was a similar whooping cough scare at Children’s Hospital in Boston last fall that involved 36 adults and 2 children. Definitive tests, though, did not find pertussis.

“Because we had cases we thought were pertussis and because we had vulnerable patients at the hospital, we lowered our threshold,” she said.

“If we had stopped there, I think we all would have agreed that we had had an outbreak of whooping cough and that we had controlled it,” Dr. Kirkland said.

“It’s a problem; we know it’s a problem,” Dr. Perl said. “My guess is that what happened at Dartmouth is going to become more common.”

Circular logic in medicine

This story is of vital importance in current events because RT-PCR tests are what’s being used as the standard COVID test, yet the problem of pseudo-epidemics is hardly being discussed. Rapid testing to see if you have a virus is a very new capability, which is one reason the world has struggled with scaling up test capacity.

“The big message is that every lab is vulnerable to having false positives,” Dr. Petti said. “No single test result is absolute and that is even more important with a test result based on P.C.R.”

This is hardly a controversial statement; it’s true for most kinds of scientific measurement. But despite being an anodyne and obvious claim, in the case of COVID it has not only become a controversial idea — it has been entirely rejected by governments and the medical establishment. Worse still, anyone who raises questions about the true severity of COVID tends to get an answer of, “I guess you don’t care about the hundreds of thousands of deaths it caused”. But if the tests are wrong then so are death counts, and thus this sort of answer is missing the point.

The problem is simple but vicious circular logic. In the 2006 case sanity was able to reassert itself because there was a much slower test which was considered reliable. It defined what we call the ground truth: the final arbiter of reality. PCR tests could be cross-checked against the ground truth after enough time had passed, allowing the big reveal that the error rate on the PCR was 100%.

But with COVID-19 there is no other test. And it’s a “new” virus, whose exact effects are unknown, so it was decided that the obvious choice of using symptoms as the ground truth would be taking too much risk.

In this situation something new and very nasty has happened. The PCR test has itself become the ground truth. Despite having had an error rate of 100% in the past, it now by definition has an error rate of zero.

Test positive? You’ve got the virus, end of story. Feel fine? Irrelevant, it’s an asymptomatic infection. No antibodies? Doesn’t matter, surely you’ll have them soon. Never develop antibodies but start testing negative anyway? How mysterious, perhaps you have a new form of immunity previously unknown to science. Test positive, then negative, then positive? Terrifying: the body must not develop immunity like it does for other viruses. How about negative, positive, negative, positive, negative? Well, we’ll call that a positive just to be safe. Symptoms stopped months ago but the positive tests keep coming? It can’t be the test, you’ll just have to stay confined indefinitely. And so on.

Many of the explanations being put forward for COVID PCR timeseries require new medical theories to be invented on the spot, some of which are absurd. This is exactly what would be expected to happen in an environment where a noisy test has been mandated to be interpreted as error free. There’s a large and growing amount of evidence that COVID testing may be unreliable, just like it was at Dartmouth-Hitchcock and other places. But stripped of their ability to say “that’s a false positive” by the monopoly of a test for which no other ground truth exists, public health bodies have become increasingly erratic. In 2006 the only thing that saved them from believing they’d controlled a non-existent epidemic was an accurate ground truth test. Without that the medical experts would have come to resemble schizophrenics, believing they were fighting an invisible undefeatable enemy that they saw signs of everywhere even though it wasn’t real. And the longer it went on for, and the more disruptive their actions became, the harder it’d have become to accept the truth — that they’d shut down the hospital and endangered patients for nothing.

That’s why in 2007 the staff told their story, to warn us of the dangers of PCR. But it didn’t work; their warnings went unheard.

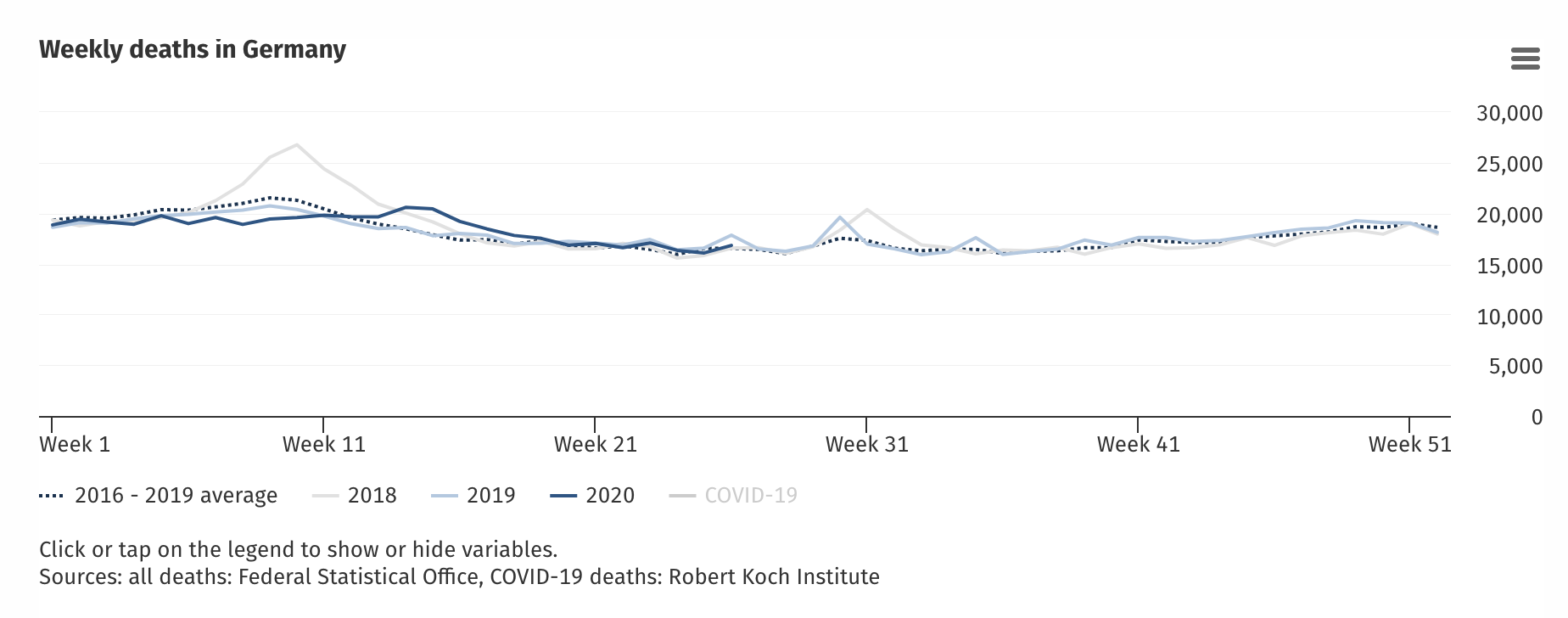

To be clear, I’m not arguing today that COVID-19 is a pseudo-epidemic of the severity seen at Dartmouth-Hitchcock. For that to be true there’d have to be no true COVID cases anywhere at all, and that would require explaining the spikes in excess mortality seen in some countries, the unusual breathing difficulties and so on. It’s a topic for another day. For now, let’s observe that quite a lot of countries have actually seen no excess deaths whatsoever, even when claiming e.g. 200,000 cases. For example, Germany:

And also Austria, Denmark, Estonia, Finland, Greece, Hungary, Luxembourg, Malta and Norway, to name just a few. The question of why some countries have seen cases without excess deaths and others saw spikes is of course a hotly debated mystery, but each essay has limited space, so today I’d rather talk about false positives.

FP rates and their determination

The false positive rate on COVID testing is important. It matters because of the staggering amount of testing being done. In the USA around half a million tests are being done per day as of the time of writing, and public health experts are telling people that “subduing the virus” will require 4.3 million tests per day. This is a very large amount of testing. At these scales even low FP rates would cause a massive never ending pseudo-epidemic.

When I started researching this topic I thought I’d find lots of studies into the FP rates of COVID tests. In fact there’s very little.

One source is this report from Norway’s health authority, in which they said that to find one true positive they must test 12,000 people, of whom 15 will be false positives and one will be the case they’re looking for.

But how do they know this, if the test is the ground truth? They don’t. It’s circular logic:

“In such situations, health professionals should not rely on a positive result until they have taken a new test to confirm it”

In other words they define a true positive as two positive tests. This isn’t a logically valid definition.

Consider the Dartmouth-Hitchcock scenario: the test is useless. The error rate is 100%. In that event “nearly 1000” people were tested (call it a round thousand), and 142 tested positive, but the true positive rate was zero. If we model the test as a biased coin that comes up positive 14.2% of the time, then using Norway’s definition they’d have calculated the share of people infected as (142/1000)² ≈ 2%, the chance of two positives in a row. The outbreak would never have ended.

Remember the figure of 2%. We’ll come back to it.
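The 2% figure is easy to check. Here is a minimal Python sketch, assuming (as the coin model does) that every result is an independent draw with the observed 14.2% positive rate, and that a "confirmed" case needs two positives in a row. The function name is made up for this illustration.

```python
def confirmed_rate(positive_rate: float, repeats: int = 2) -> float:
    """Chance that `repeats` independent tests all come back positive."""
    return positive_rate ** repeats


tested = 1000     # "nearly 1000" in the article, rounded up as in the text
positives = 142   # positive PCR results, none confirmed by culture

single = positives / tested        # share positive on one test
double = confirmed_rate(single)    # share positive twice in a row

print(f"one positive:  {single:.1%}")
print(f"two positives: {double:.1%}")
```

On these assumptions a test that is pure noise still "confirms" about one person in fifty.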

Raw FP rates can be misleading. Translated into percentages, Norway is claiming their test has an FP rate of 0.125% (15 in 12,000) which, putting the logical issues to one side for a moment, sounds very low indeed. But the signal they’re trying to detect is so tiny that 15 of every 16 positive results are false, an error rate of 94%, very close to the 100% error rate of the “whooping cough” test.
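Both percentages come from the same three Norwegian numbers, just divided by different things. A short sketch of the arithmetic follows; the variable names are mine, and the FP rate uses everyone tested as the denominator because that is how the 0.125% figure is obtained.

```python
tested = 12_000        # people tested to find one real case (Norway's figure)
false_positives = 15
true_positives = 1

# FP rate as quoted in the text: false positives over everyone tested.
fp_rate = false_positives / tested

# Share of positive results that are wrong.
share_false = false_positives / (false_positives + true_positives)

print(f"FP rate: {fp_rate:.3%}")
print(f"positives that are false: {share_false:.0%}")
```

The first number describes the test; the second describes what a positive result is worth when almost nobody being tested is infected.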

A paper in the British Medical Journal attempts to dance around this completely illogical methodology:

The lack of such a clear-cut “gold-standard” for covid-19 testing makes evaluation of test accuracy challenging.

Inevitably this introduces some incorporation bias, where the test being evaluated forms part of the reference standard, and this would tend to inflate the measured sensitivity of these tests.

The paper also indicates the medical establishment’s views on PCR testing: that its real problem is false negatives and not false positives. This is because medical researchers are defining a false negative as a negative followed by a positive. What if the negative was correct and the positive was false? This misuse of logic can convert false positives into false negatives, and because false negatives are deemed much worse than false positives, this is then used as an excuse to lower the thresholds on the tests even further than was already done. That could yield even more false positives that are interpreted as even more false negatives, in a loop.

In fact in the BMJ paper the author doesn’t even discuss false positives, apparently in the belief that they don’t really happen. It’s only concerned with false negatives, due to reported rates of between 2% and 29%. (Such a huge variance on claimed false negative rates must lead us to question whether this is even the same test being studied at all).

Yet in the end, despite recognising that calibrating a test against itself is “challenging” (read: meaningless), the alternative would be to not have a test and that is unthinkable. Now that mass-scale RT-PCR exists, it must be used, whether it makes sense or not.

One way to determine an FP rate is to submit for testing samples you know can’t possibly be infected. The president of Tanzania memorably did this by submitting samples from goats and fruit to a lab, and they proceeded to come back positive. He then fired his chief medical officer. Although he was ridiculed, scientists have done this sort of test before. Prior to COVID the most recent serious new coronavirus was MERS-CoV, which was propagating around 5–7 years ago. Researchers submitted a large variety of blinded samples to labs, some of which contained just regular coronaviruses instead of MERS-CoV. 8.1% of labs generated false positives because they couldn’t distinguish MERS-CoV from other harmless viruses (of the type that cause a common cold). NB: This is not the same as an 8.1% FP rate. The true FP rate if this had been scaled up would depend on the relative testing allocations. If all tested labs had equal capacity and samples were load balanced between them evenly, then it would be the FP rate. In real deployment heterogeneous lab accuracy would show up as localised “hotspots” that didn’t really exist.

For 8% of labs to generate false positives is a very high number. To put it in perspective, an 8% FP rate for COVID in the USA would create a never ending pseudo-epidemic of about 600 deaths a day, forever, and (assuming the “suppression” target of 4.3 million tests per day) around 344,000 new fake cases per day even if the virus had entirely disappeared.

The true FP rate for COVID must be lower than that because in some countries where the epidemic is over the proportion of positive tests is more like 1%-2%, so that sets an upper limit on what any FP rate can be. But an 8% error rate would have been unusable for mass testing: the fact that this was happening just five years ago must raise the question of how many FPs the COVID testing regimes are yielding. Yet nobody knows the answer and some medical “experts” are pretending the tests have an FP rate of zero. This is delusional and should worry everyone. Left unchecked it would mean that mitigations never end.
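To see how testing volume turns a small FP rate into a large number of cases, here is a rough sketch. It assumes nobody tested is actually infected, and it treats 8% as a per-test FP rate, which, as noted earlier, is not the same thing as 8.1% of labs producing false positives. The 1% and 2% rows reuse the positivity ceiling mentioned in the previous paragraph as hypothetical FP rates. The function name is invented for the example.

```python
def fake_cases_per_day(tests_per_day: int, fp_rate: float) -> int:
    """Positives expected per day when nobody tested is actually infected."""
    return round(tests_per_day * fp_rate)


current_tests = 500_000      # approximate US daily tests at time of writing
target_tests = 4_300_000     # the "suppression" target

for fp_rate in (0.08, 0.02, 0.01):
    now = fake_cases_per_day(current_tests, fp_rate)
    target = fake_cases_per_day(target_tests, fp_rate)
    print(f"FP rate {fp_rate:.0%}: {now:,} per day now, {target:,} per day at target")
```

Even at the low end of that range the target testing volume would yield tens of thousands of positives a day with no virus present at all.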

Public health officials don’t understand data

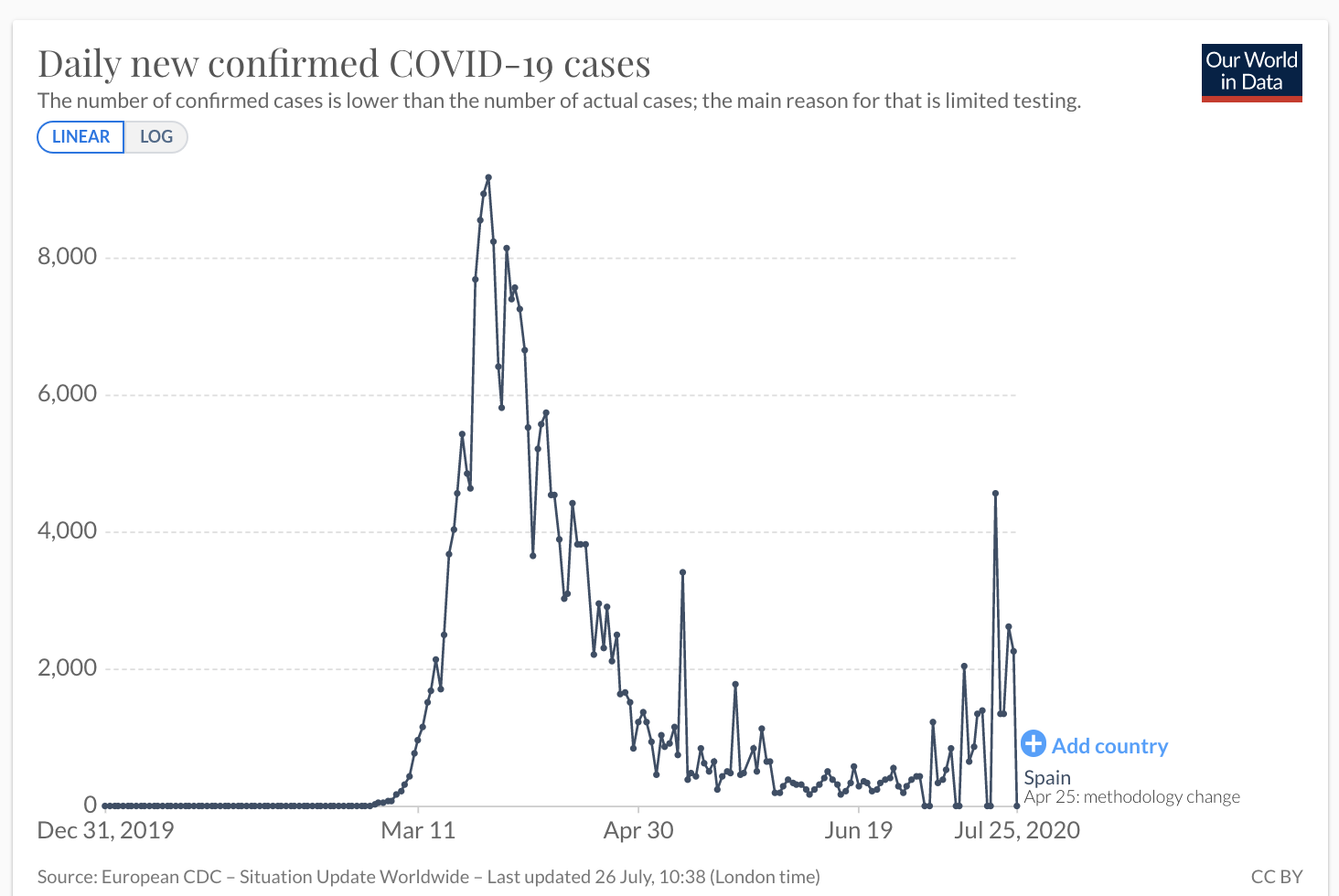

An example of the rampant confusion in governments over COVID data came yesterday in the form of a sudden decision that anyone returning from Spanish holidays to the UK would have to spend two weeks under house arrest. This is supposedly due to a sudden “second wave” of cases in Spain.

Yep, that’s a second wave alright, starting around maybe July 3rd.

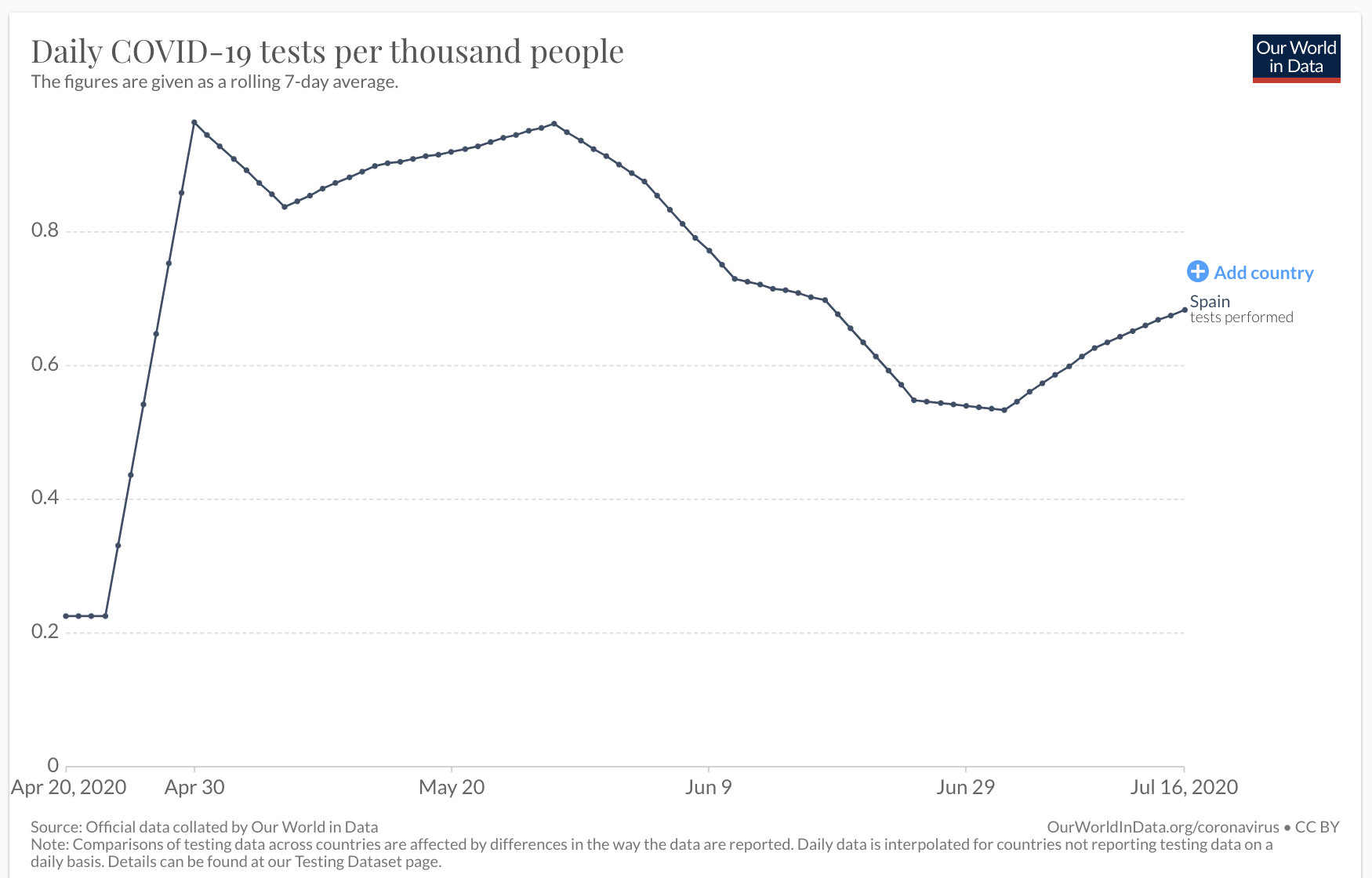

But how many tests is Spain doing? Is it possible they simply increased the number of tests they carried out? Surely that’s a basic question to ask?

Indeed they increased testing around July 3rd and cases started going up at exactly the same time. Now, this is just a correlation. It doesn’t automatically imply the increase in positive cases is due to a testing increase. If tests were triggered by some ground truth, like someone presenting with symptoms, then testing volumes would just be following the virus rather than the other way around, which is what we’d want to see.

Unfortunately the WHO has told countries to “test, test, test” so testing isn’t being driven by presentation of symptoms. We know this because so many tests come back as negative (if we assume someone presenting with COVID symptoms means they have COVID, of course, which is a whole other debate). Here’s the proportional graph:

Viewed this way the second wave is gone. There’s a tiny trend upwards after the last week, but a movement from 1.5% to 2.5% of tests returning positive is, as we’ve seen, not necessarily meaningful with something like RT-PCR. A roughly 2% FP rate is what you’d get with a Dartmouth-Hitchcock type problem using the “repeat it twice” technique. Even if we assume modern testing is much better, the numbers are so low it’s still in the danger zone.
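The gap between the raw and the proportional view can be reproduced with a toy series. The figures below are invented purely for illustration and are not Spain's data; the only assumption is that the share of tests returning positive stays fixed at 2% while test volume grows.

```python
# Hypothetical weekly figures, invented for illustration. NOT Spain's data.
weekly_tests = [200_000, 250_000, 350_000, 500_000]
positive_share = 0.02   # assumed constant share of tests returning positive

cases = [round(n * positive_share) for n in weekly_tests]
positivity = [c / n for c, n in zip(cases, weekly_tests)]

for n, c, p in zip(weekly_tests, cases, positivity):
    print(f"tests {n:>7,}  cases {c:>6,}  positivity {p:.1%}")
```

Raw cases rise 2.5-fold in this made-up series while positivity does not move, which is why the case count alone cannot tell a wave apart from a testing increase.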

Spain performs temperature checks on all new arrivals at airports. Probably what happened here is that people started arriving for holidays in large numbers. Some fraction of those people will fail a temperature check for whatever reason, could be all kinds of things, and those people will be given an RT-PCR test. And of those, some fraction will come back positive even if they’re not, because that’s just what tests do. That’s all you need to create a “second wave”.

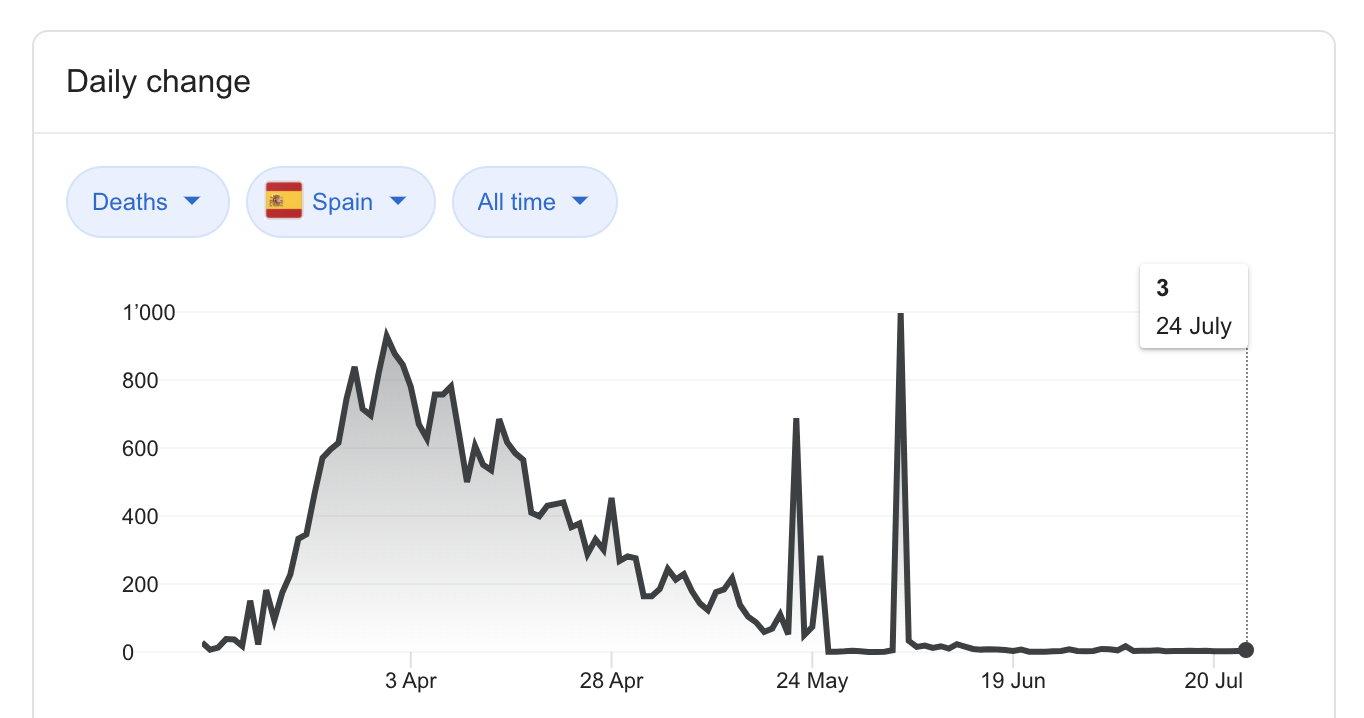

To repeat, I’m not claiming 100% of these new positives are false. There are probably some people with COVID who don’t realise it mixed in there too. My point is that we don’t actually know that, because when attempting to detect a very weak signal false positives can rapidly overwhelm your true positives even if the FP rate isn’t actually very high. At the tail end of the epidemic the risk of FPs is especially high because, as can be seen in the graph of deaths, COVID actually disappeared a long time ago in Spain, so the signal is very weak indeed:

From 9th June there was basically no COVID-positive death in Spain, even though 1%–1.5% of tests were returning positive overall. It’s highly possible that this represents the noise floor of the tests.
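How quickly a weak signal gets swamped can be sketched with the standard base-rate calculation. The 1% FP rate and the perfect sensitivity below are assumptions chosen for illustration, not measured values for any COVID test, and the function name is my own.

```python
def false_positive_share(prevalence: float, fp_rate: float,
                         sensitivity: float = 1.0) -> float:
    """Fraction of positive results that are false (1 minus PPV)."""
    true_pos = prevalence * sensitivity
    false_pos = (1.0 - prevalence) * fp_rate
    total = true_pos + false_pos
    return false_pos / total if total else 0.0


fp_rate = 0.01   # assumed 1% false positive rate, not a measured value
for prevalence in (0.10, 0.01, 0.001):
    share = false_positive_share(prevalence, fp_rate)
    print(f"prevalence {prevalence:.1%}: {share:.0%} of positives are false")
```

Under these assumptions roughly half of all positives are false once true prevalence among those tested falls to 1%, and about nine in ten are false at 0.1%.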

Conclusion

COVID times have crushed my faith in government and academic health expertise, probably forever. So many problems have occurred, like modellers driving government policy despite being unable to actually program computers or predict epidemics. But one of the most depressing problems is the apparently universal assumption that false positives aren’t important and lockdowns are free.

Given current definitions COVID-19 will never end. People will be dying of it forever, even if the virus disappears completely. Worse still, the system is locked in a series of feedback loops: if something causes test numbers to rise then so will case numbers, which in turn will cause a further increase in testing, causing the rise to continue, triggering local lockdowns and pointless evidence-free rituals, until people get depressed and stop trying to do things, causing the numbers being tested to fall again.

Health is run by people who suffer no consequences from policy over-reactions. Lockdown-induced job losses won’t affect them, as they work for the government. A larger-scale case of “one rule for them and another for us” can’t be imagined. It’s thus no surprise when we read things like Public Health England defining a COVID death as the death of anyone who has ever tested positive, for any reason, at any time. In other words, the UK being supposedly “one of the worst hit countries in the world” is a statistical fantasy. PHE officials defined it this way because they didn’t want to be accused of being nasty libertarians who were underplaying the problem just to help capitalist workers. The idea that they’d create other, bigger problems simply didn’t occur to them, or worse, it did but they didn’t care.